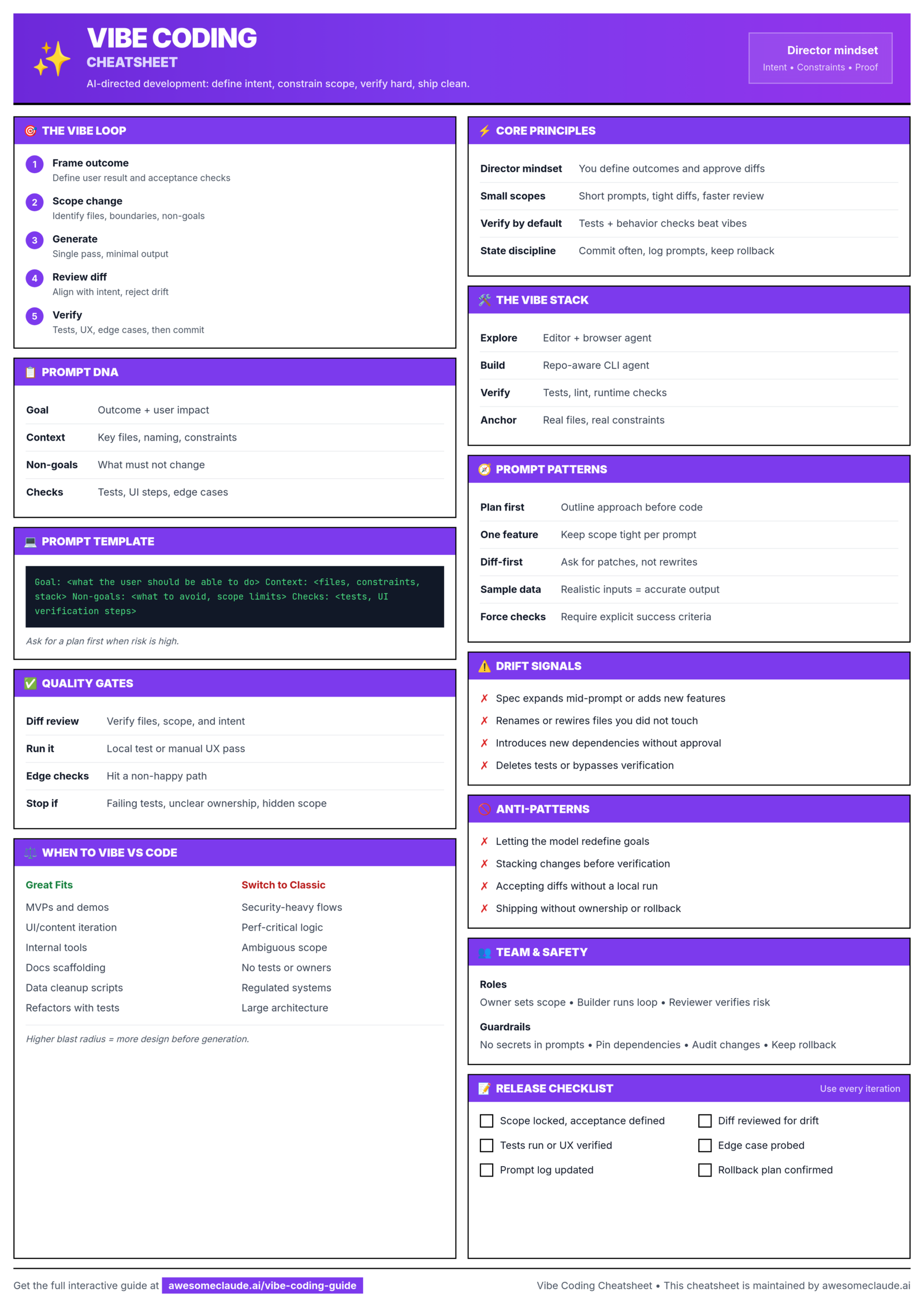

Here’s the essence of the Vibe Coding Guide:

Vibe coding is AI-assisted software development where you act more like a director than a line-by-line coder: you define the goal, constraints, acceptance criteria, and boundaries; the AI drafts code; you review, test, and decide what ships. The guide stresses that it is not no-code and that the human remains accountable for architecture, correctness, security, and maintainability. (Awesome Claude)

The core workflow is a loop:

- Frame the outcome
- Scope the change
- Generate with AI
- Do a “vibe check” in the app
- Run objective checks such as diffs, tests, performance, and security
- Commit, document, and pick the next iteration (Awesome Claude)
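
One way to picture a single pass of this loop is as a small function. This is only an illustrative sketch: `run_iteration` and its callback parameters are hypothetical names, not something the guide defines, and the framing and scoping steps are assumed to have already shaped what `generate` is asked to do.

```python
from typing import Callable, Iterable


def run_iteration(
    generate: Callable[[], str],
    vibe_check: Callable[[str], bool],
    objective_checks: Iterable[Callable[[str], bool]],
) -> tuple[str, str]:
    """Run one pass of the loop and return (decision, patch).

    All three arguments are placeholders supplied by the caller:
    - generate: asks the AI for a patch (goal and scope already framed)
    - vibe_check: the human's quick look at the running app
    - objective_checks: diff review, tests, performance, security, ...
    """
    patch = generate()

    # Subjective gate first: does the change feel right in the app?
    if not vibe_check(patch):
        return "revise", patch

    # Objective gates: any single failure sends the patch back.
    for check in objective_checks:
        if not check(patch):
            return "revise", patch

    # Only now is the change ready to commit and document.
    return "commit", patch
```

A "revise" result means the next prompt should narrow or correct the request rather than pile new work on top of a patch that has not passed.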

The guide’s strongest advice is to keep scope small. One feature per prompt, clear file boundaries, explicit non-goals, and diff-first requests reduce model drift and make review easier. It recommends prompts that include goal, constraints, context, allowed files, acceptance checks, non-goals, and the expected deliverable. (Awesome Claude)
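
File boundaries are easier to enforce when they are checked mechanically. The following is a minimal sketch, assuming the AI returns a unified diff with git's default `a/` and `b/` path prefixes; the function names `changed_files` and `out_of_scope` are made up for illustration and do not come from the guide.

```python
def changed_files(diff_text: str) -> set[str]:
    """Collect file paths from the '---' / '+++' headers of a unified diff.

    Simplification: a removed content line that itself starts with '-- '
    would also match here. A real tool should use a proper diff parser.
    """
    files: set[str] = set()
    for line in diff_text.splitlines():
        if not line.startswith(("--- ", "+++ ")):
            continue
        # Drop the marker and any tab-separated timestamp after the path.
        path = line[4:].split("\t", 1)[0].strip()
        if path == "/dev/null":  # marks a created or deleted file
            continue
        if path.startswith(("a/", "b/")):  # git's default prefixes
            path = path[2:]
        files.add(path)
    return files


def out_of_scope(diff_text: str, allowed: set[str]) -> list[str]:
    """Return the touched files that the prompt did not allow, sorted."""
    return sorted(changed_files(diff_text) - allowed)
```

If `out_of_scope` returns anything, the patch has drifted beyond the requested change and is a candidate for rejection before a line-by-line review even starts.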

Its quality bar is: read the diff, run the app, run relevant tests, check edge cases, verify dependencies, and keep a rollback path. The guide warns against trusting AI-generated code just because it looks plausible. Common failure modes include hallucinated APIs, oversized rewrites, hidden regressions, and drift from the original task. (Awesome Claude)
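
Some of these checks can be partly automated. As one narrow example, hallucinated dependencies in generated Python code can be flagged by testing whether each imported top-level module resolves in the current environment. This is a sketch under that assumption, using only the standard library; `unresolved_imports` is a hypothetical helper, and it does not catch hallucinated functions inside modules that do exist.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that cannot be found.

    The code is parsed, never executed, so this is safe to run on
    untrusted AI output. Relative imports are skipped because they
    depend on the package layout.
    """
    tree = ast.parse(source)
    names: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.add(node.module.split(".")[0])
    # find_spec returns None when no installed module matches the name.
    return sorted(name for name in names if importlib.util.find_spec(name) is None)
```

An empty result only means the imports exist; reading the diff and running the tests are still needed to confirm the code uses them correctly.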

A practical prompt template from the guide would look like:

Role: You are maintaining this repo.

Goal: <what the user should be able to do>

Constraints: <stack, libraries, style rules>

Context: <files, endpoints, data models>

Files: <what can change / what must not>

Acceptance: <tests, UI checks, edge cases>

Non-goals: <explicitly out of scope>

Deliverable: <patches + brief summary>
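
To make the template harder to fill in halfway, it can be wrapped in a small data structure that refuses to render with empty sections. The sketch below is one possible shape, not part of the guide: the `PromptSpec` class and its field names are assumptions that simply mirror the headings above.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container mirroring the template's sections."""

    goal: str
    constraints: str
    context: str
    files: str
    acceptance: str
    non_goals: str
    deliverable: str = "patches + brief summary"
    role: str = "You are maintaining this repo."

    def render(self) -> str:
        """Build the prompt text, failing loudly if a section is blank."""
        sections = [
            ("Role", self.role),
            ("Goal", self.goal),
            ("Constraints", self.constraints),
            ("Context", self.context),
            ("Files", self.files),
            ("Acceptance", self.acceptance),
            ("Non-goals", self.non_goals),
            ("Deliverable", self.deliverable),
        ]
        missing = [label for label, value in sections if not value.strip()]
        if missing:
            raise ValueError(f"Prompt is missing: {', '.join(missing)}")
        return "\n\n".join(f"{label}: {value.strip()}" for label, value in sections)
```

Requiring every section, including non-goals, forces the scoping decisions to be made before the model is asked for anything.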

My takeaway: the guide is really about engineering discipline around AI coding. It is pro-AI, but not “let the model do whatever.” The ideal pattern is: use AI for speed, scaffolding, UI iteration, bug fixes, and small refactors; use human judgment for architecture, security, production risk, and final verification.
