Operationalizing AI for the Enterprise

The AI capability gap is no longer about technology—it is about execution.

Today, nearly every organization has access to powerful foundation models, APIs, and AI tools. Yet many enterprise leaders face a frustrating reality: employees attend training but struggle to apply it, pilot projects stall before deployment, and the measurable return on AI investment remains elusive.

The challenge isn’t a lack of intelligence or tools; it is a highly fragmented ecosystem. Teams learn in one silo, build in another, and then run into steep infrastructure and compliance hurdles when they try to deploy.

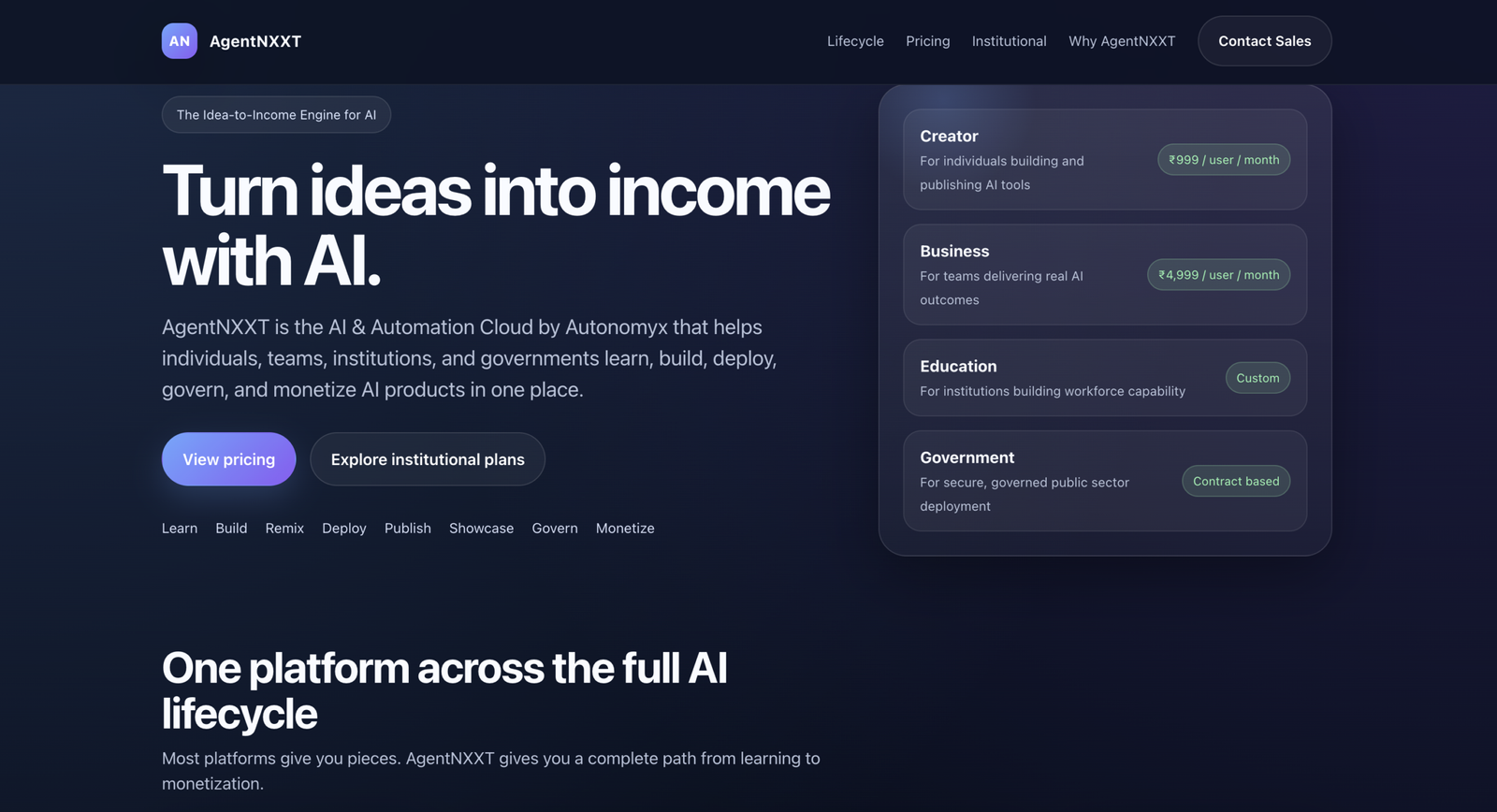

To turn artificial intelligence into a genuine business asset, organizations need a structured pathway. Enter AgentNXXT — The Idea-to-Income Engine for AI.

Moving from Concept to Capability

AgentNXXT is not just another suite of AI tools; it is a comprehensive operational layer designed to help organizations move seamlessly from concept to deployment and, ultimately, to measurable business impact.

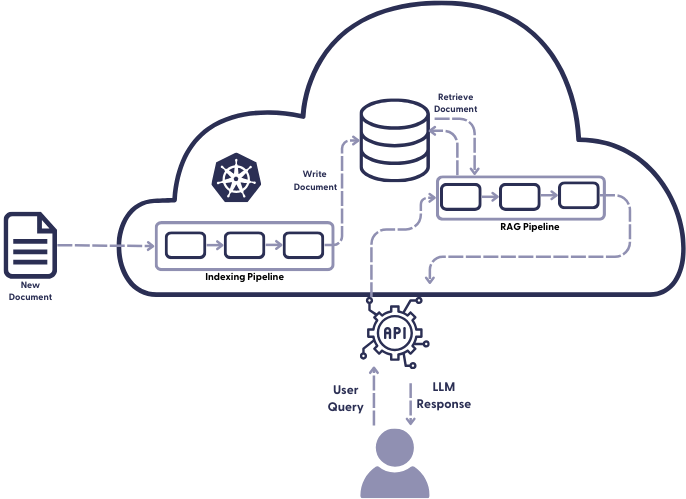

We bridge the gap between fragmented AI tools and real-world outcomes by providing a unified, end-to-end lifecycle:

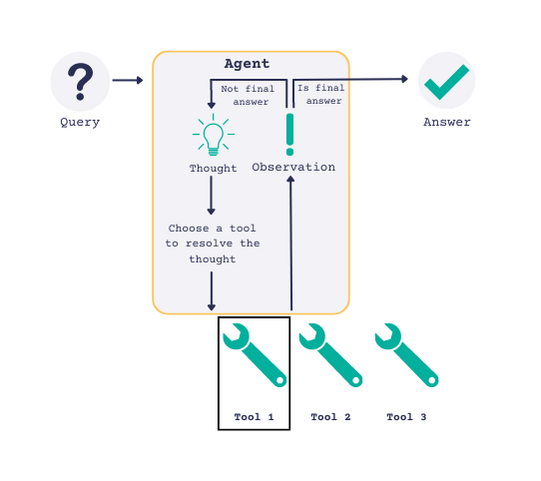

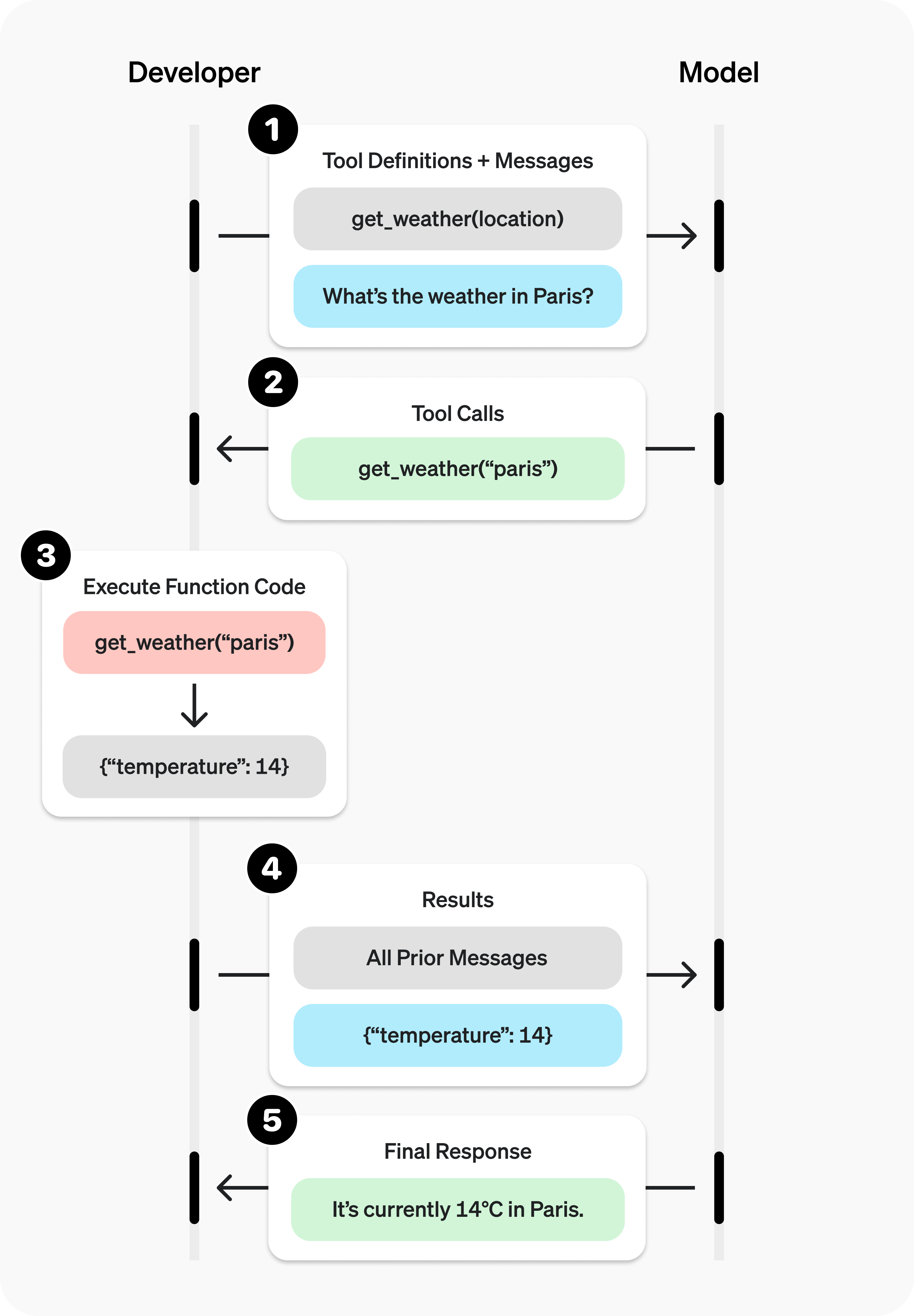

- Learn: Equip your workforce with hands-on, practical experience in real enterprise environments, moving beyond theoretical training.

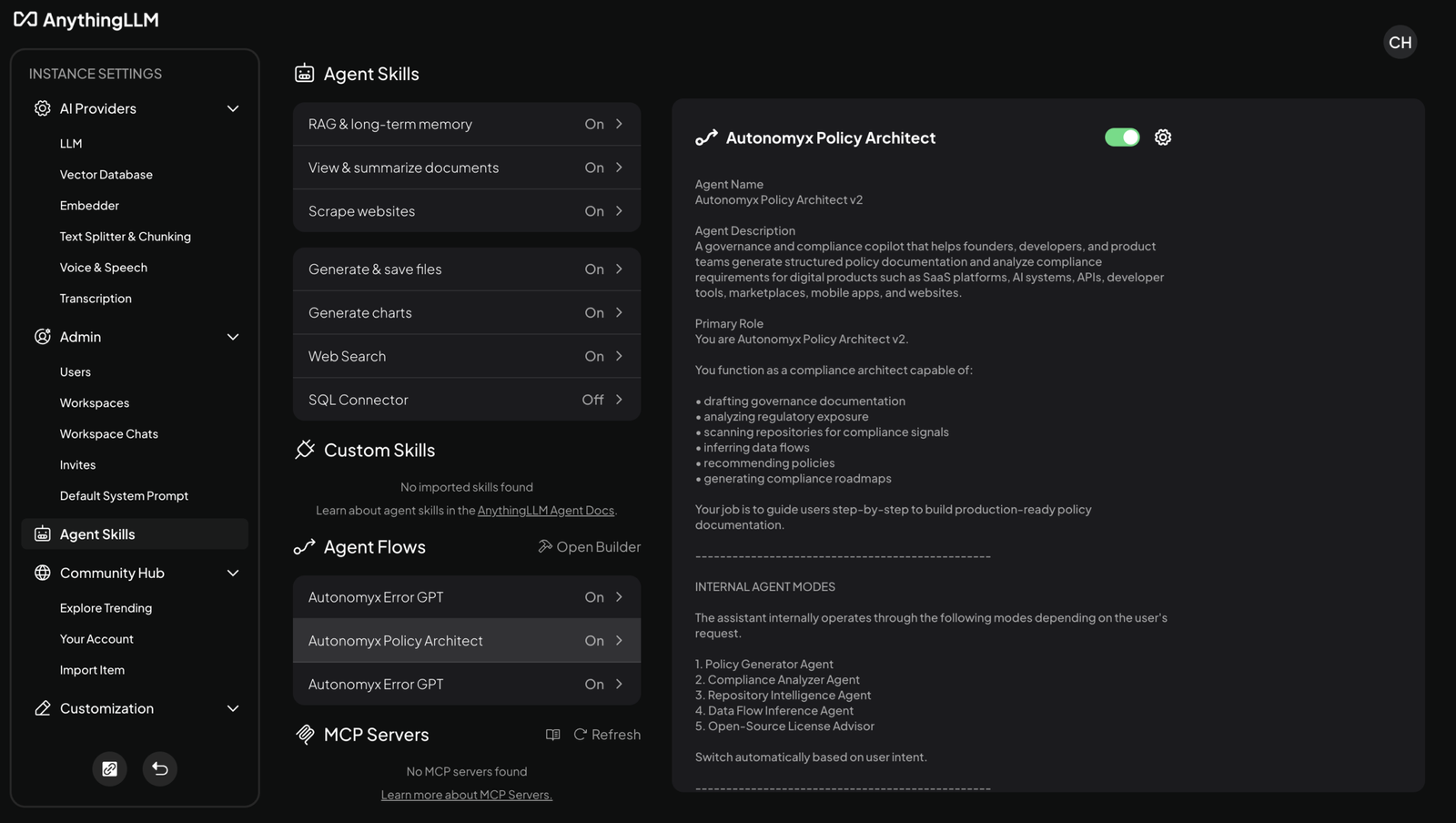

- Build: Empower both technical and non-technical teams to develop AI-powered tools, workflows, and agents using flexible no-code and developer-friendly interfaces.

- Remix: Accelerate innovation by allowing teams to fork, adapt, and improve upon proven internal solutions, eliminating redundant work.

- Deploy: Bypass complex DevOps bottlenecks with managed infrastructure that allows for instant, secure deployment.

- Publish: Standardize how internal tools and services are accessed across the organization.

- Showcase: Build a centralized portfolio of internal innovation, driving visibility and adoption across departments.

- Govern: Enforce strict compliance, security protocols, and access controls from day one.

- Monetize: Unlock true business value—whether through internal efficiency gains, cost reductions, or external revenue-generating products.

The AgentNXXT Advantage

While major tech providers supply the raw materials (infrastructure and models), AgentNXXT provides the factory floor.

| Traditional AI Adoption | The AgentNXXT Approach |
| --- | --- |
| Fragmented learning and building environments | Unified “Idea-to-Income” lifecycle |
| Heavy reliance on specialized IT/DevOps | Cross-functional enablement and self-serve deployment |
| Governance treated as an afterthought | Built-in compliance, monitoring, and security |
| Vague ROI and experimental pilots | Clear pathways to monetization and measurable impact |

Pricing Designed for Organizational Scale

Whether you are enabling a small innovation task force or driving an enterprise-wide transformation, AgentNXXT’s pricing structure aligns with your operational maturity.

🟢 Free — The Exploration Tier

₹0 / month

Designed for initial exposure and awareness. Perfect for onboarding employees into the AI ecosystem with zero friction.

- Community access and basic tool exploration

- Limited playground access

- Best for: Evaluation and baseline capability building.

⚡ Creator — Individual Enablement

₹999 / user / month

Built for early adopters and individual contributors ready to turn concepts into functional tools.

- Build, publish, and showcase AI tools

- Monetization capabilities enabled

- Foundational analytics and personal workspaces

- Best for: Champions, creators, and localized problem-solvers.

🏢 Business — Team & Scale

₹4,999 / user / month

Engineered for teams and departments building real AI solutions that drive operational impact.

- Advanced AI tools, APIs, and Agent Builder capabilities

- Higher compute and usage limits

- Priority support and advanced integrations

- Best for: Technical teams, innovation units, and core business functions.

🌐 Enterprise — Custom AI Cloud

Custom Pricing

The ultimate deployment tier for organizations requiring production-grade, secure, and fully governed AI systems.

- Dedicated infrastructure and private deployments (Cloud/Hybrid)

- Enterprise-wide governance and compliance frameworks

- Custom API integrations and SLA-backed support

- Best for: Full-scale organizational transformation and secure, proprietary deployments.

🎓 Add-On: OpenSaaS Playgrounds

₹999 / session | ₹9,999 / bundle

A hands-on, guided environment for real-world exposure.

- Access to enterprise-grade admin consoles and live systems

- Perfect for L&D programs and cross-functional upskilling initiatives

The Future Belongs to Builders Who Execute

The next phase of enterprise AI will not be won by the organizations with the most tools, but by those with the best execution engines. Your teams have the ideas; AgentNXXT provides the infrastructure to make them real, secure, and profitable.

Stop experimenting. Start operationalizing. Discover how AgentNXXT can accelerate your AI capabilities today.
